3D Projecting JunoCam images back onto Jupiter using SPICE

Dec 12 2017, 02:50 PM

Post #1

Newbie Group: Members Posts: 16 Joined: 4-September 16 Member No.: 8038

Hi Everyone,

As part of an upcoming VR/VFX-based project, I'm attempting to project the raw images from JunoCam back onto a 3D model of Jupiter, the goal being to do this precisely enough that multiple images from a specific perijove can be viewed on the same model. However, I'm currently having one or two issues calculating the correct JunoCam rotation values, and was hoping someone with more experience might be able to point me in the right direction! My process for processing a single raw image is as follows:

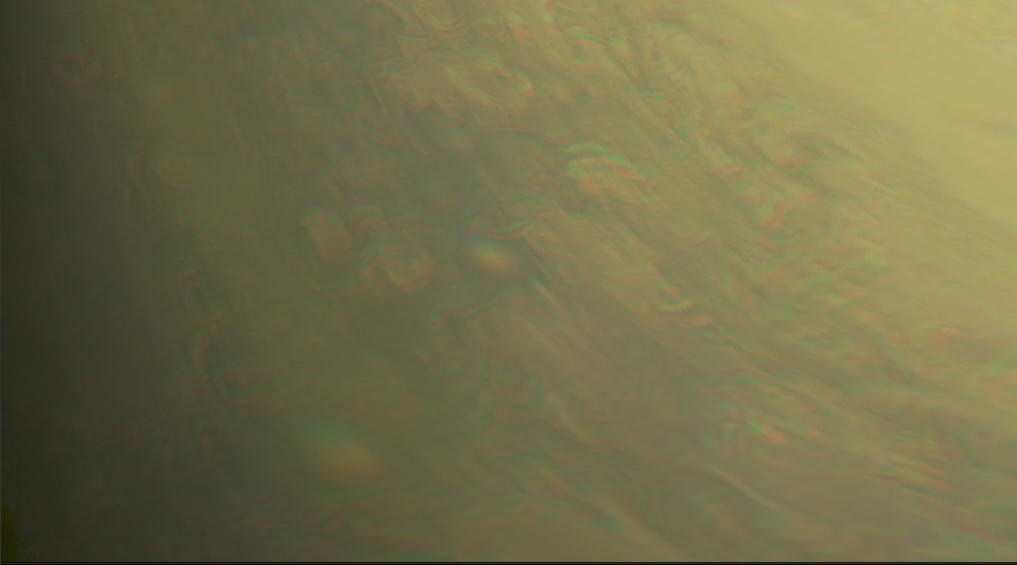

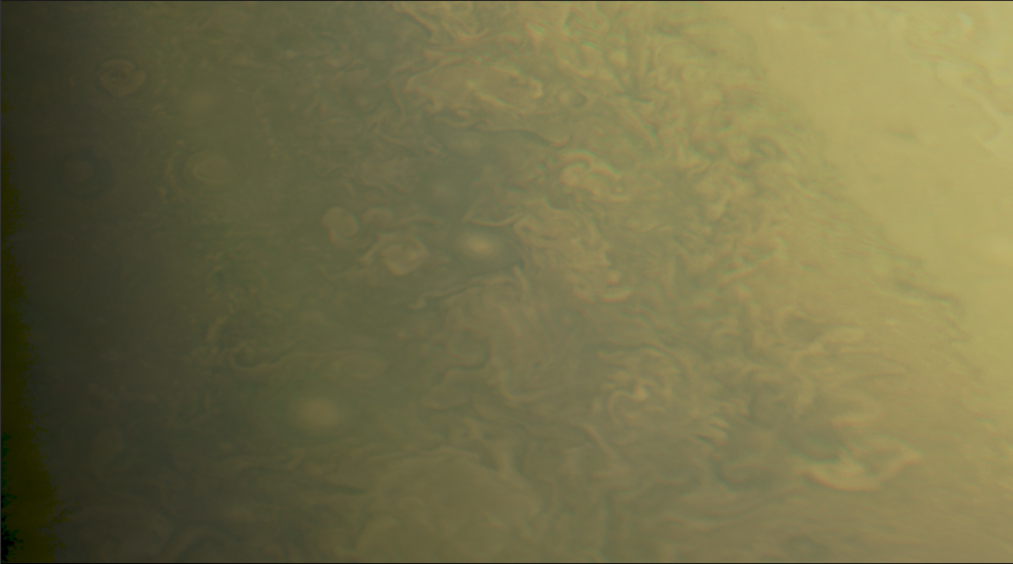

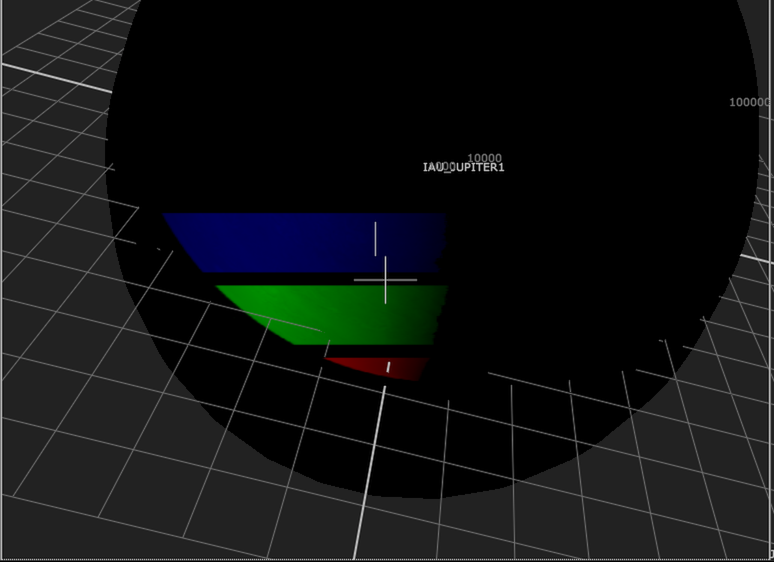

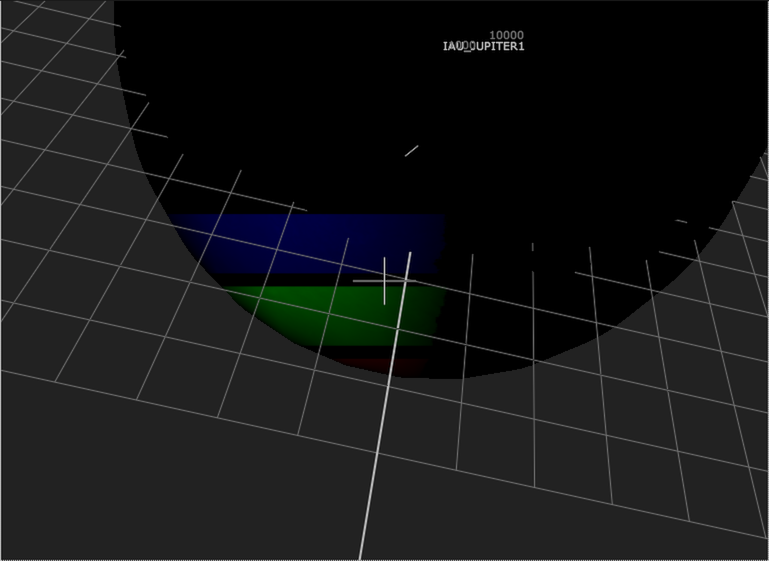

The frame transformations I'm calculating are currently: J2000 >> IAU_JUPITER >> JUNO_SPACECRAFT >> JUNO_JUNOCAM_CUBE >> JUNO_JUNOCAM. Here's the snippet of SpiceyPy code used:

CODE

    import numpy as np
    import spiceypy as spice

    # exposureTimeET, rotationMatrixToEulerAngles and radiansXYZtoDegreesXYZ are defined elsewhere (not shown)

    # Calculate the rotation offset between J2000 and IAU_JUPITER
    rotationMatrix = spice.pxform("J2000", "IAU_JUPITER", exposureTimeET)
    rotationAngles = rotationMatrixToEulerAngles(rotationMatrix)
    j2000ToIAUJupiterRotations = radiansXYZtoDegreesXYZ(rotationAngles)

    # Get the position of JUNO_SPACECRAFT relative to JUPITER in the IAU_JUPITER frame, ignoring corrections
    junoPosition, lt = spice.spkpos('JUNO_SPACECRAFT', exposureTimeET, 'IAU_JUPITER', 'NONE', 'JUPITER')

    # Get the rotation of JUNO_SPACECRAFT relative to the IAU_JUPITER frame
    rotationMatrix = spice.pxform("IAU_JUPITER", "JUNO_SPACECRAFT", exposureTimeET)
    rotationAngles = rotationMatrixToEulerAngles(rotationMatrix)
    junoRotations = radiansXYZtoDegreesXYZ(rotationAngles)

    # Get the rotation of the JUNO_JUNOCAM_CUBE to the SPACECRAFT, based upon data in juno_v12.tf
    rotationMatrix = np.matrix([[-0.0059163, -0.0142817, -0.9998805],
                                [0.0023828, -0.9998954, 0.0142678],
                                [-0.9999797, -0.0022981, 0.0059497]])
    rotationAngles = rotationMatrixToEulerAngles(rotationMatrix)
    junoCubeRotations = radiansXYZtoDegreesXYZ(rotationAngles)

    # Get the rotation of JUNO_JUNOCAM to JUNO_JUNOCAM_CUBE, based upon data in juno_v12.tf
    junoCamRotations = [0.583, -0.469, 0.69]

The resultant position coordinates and Euler angles are then transferred to a matching hierarchy of objects in 3D. The result is a JUNO_JUNOCAM 3D camera that should in theory be seeing exactly what is shown in the matching original exposure from steps 1-2.

Using the 8th exposure (calculated time 2017-05-19 07:07:11.520680) of this image taken during perijove 6, zooming in on the final projected result gives me the following image:

As you can see, the RGB alignment is close, but not quite perfect.
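The helpers `rotationMatrixToEulerAngles` and `radiansXYZtoDegreesXYZ` are not shown in the post, so their rotation convention is unknown. Purely as an illustration, here is a minimal sketch of what such a conversion might look like, assuming the convention R = Rz(z) · Ry(y) · Rx(x). The function names and the convention are assumptions, not the poster's actual code. If the 3D package applies its axes in a different order, the same matrix will yield different angles, which is one place a mismatch could creep in.

```python
import numpy as np

# JUNO_JUNOCAM_CUBE matrix exactly as quoted in the post (from juno_v12.tf).
JUNOCAM_CUBE_TO_SPACECRAFT = np.array([
    [-0.0059163, -0.0142817, -0.9998805],
    [0.0023828, -0.9998954, 0.0142678],
    [-0.9999797, -0.0022981, 0.0059497],
])


def rotation_matrix_to_euler_xyz(R):
    """Return (x, y, z) in radians such that R = Rz(z) @ Ry(y) @ Rx(x).

    Hypothetical stand-in for the post's rotationMatrixToEulerAngles;
    the convention is an assumption.
    """
    R = np.asarray(R, dtype=float)
    cos_y = np.hypot(R[0, 0], R[1, 0])  # |cos(y)|
    y = np.arctan2(-R[2, 0], cos_y)
    if cos_y > 1e-9:
        x = np.arctan2(R[2, 1], R[2, 2])
        z = np.arctan2(R[1, 0], R[0, 0])
    else:
        # Gimbal lock: x and z are not separately defined, so fix z = 0.
        x = np.arctan2(-R[1, 2], R[1, 1])
        z = 0.0
    return np.array([x, y, z])


def euler_xyz_to_rotation_matrix(angles):
    """Inverse of the function above: build R = Rz(z) @ Ry(y) @ Rx(x)."""
    x, y, z = angles
    cx, sx = np.cos(x), np.sin(x)
    cy, sy = np.cos(y), np.sin(y)
    cz, sz = np.cos(z), np.sin(z)
    Rx = np.array([[1, 0, 0], [0, cx, -sx], [0, sx, cx]])
    Ry = np.array([[cy, 0, sy], [0, 1, 0], [-sy, 0, cy]])
    Rz = np.array([[cz, -sz, 0], [sz, cz, 0], [0, 0, 1]])
    return Rz @ Ry @ Rx


if __name__ == "__main__":
    cube_deg = np.degrees(rotation_matrix_to_euler_xyz(JUNOCAM_CUBE_TO_SPACECRAFT))
    print("Cube Euler angles (deg), assumed convention:", cube_deg)
```

Under this assumed convention the middle angle of the cube matrix comes out close to 90°, which is where Euler angles are poorly conditioned. It may therefore be worth comparing the Euler-angle hierarchy against a camera orientation built by multiplying the rotation matrices directly.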
After doing some additional experimentation, I realised that the solution was to add an additional X rotation of -0.195° to the camera for exposure 1, -0.195°*2 for exposure 2, -0.195°*3 for exposure 3, etc. Applying this additional rotation gives me the following image:

Still not perfect, as this value is no doubt slightly inaccurate, but it's certainly a lot closer. The consistency of this additional rotation (plus the fact that the value required changes between different raw images) makes me think that I'm perhaps missing something obvious in my code above! (I am admittedly not yet compensating for planetary rotation, but I wouldn't expect that to cause a misalignment of this magnitude.)

Problem 2 is that in addition to the alignment offset between exposures, there is another global alignment offset across the entire image set. Looking again at the 8th exposure, this time in 3D space, we see the following:

The RGB bands are from our single exposure, which has been projected through our 3D JunoCam onto the black sphere, which is my stand-in for Jupiter (sitting in the exact centre of the 3D space). As you can see, there is an alignment mismatch. After yet more experimentation, I found that applying a rotation of approximately [-9.2°, 1.7°, -1.2°] to the entire range of exposures (at the JUNOCAM level) more closely aligns our 8th exposure with its expected position on Jupiter's surface; however, this does introduce a very slight drift in subsequent exposures:

I hope that all makes some sense! I'm (probably fairly obviously) new to SPICE, but after reading multiple tutorials/forum posts elsewhere, I still can't seem to pinpoint the one or two things I'm no doubt misunderstanding! I'm incredibly excited about what's possible once I iron out the issues in this process, so if anyone has any suggestions, I'd certainly love to hear them!

Thanks very much in advance!

Matt
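To put the empirical per-exposure correction from the post into numbers, the sketch below tabulates the accumulated X rotation (-0.195° times the exposure number) and compares one step of it with the nominal angle between exposures. The 80.84 exposures per Juno rotation figure is taken from Gerald's reply listed in this thread. Treating the drift as a fraction of the inter-exposure step is only one possible reading, not something the post establishes, and the function names are made up for illustration.

```python
import numpy as np

PER_EXPOSURE_OFFSET_DEG = -0.195   # empirical value quoted in the post
EXPOSURES_PER_ROTATION = 80.84     # figure quoted from Gerald's reply in this thread


def extra_x_rotation_deg(exposure_number):
    """Accumulated correction for a 1-based exposure number, as described in the post."""
    return PER_EXPOSURE_OFFSET_DEG * exposure_number


def rotation_x(angle_deg):
    """3x3 rotation matrix about the X axis for an angle in degrees."""
    a = np.radians(angle_deg)
    c, s = np.cos(a), np.sin(a)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


# Nominal spin angle covered between consecutive exposures.
nominal_step_deg = 360.0 / EXPOSURES_PER_ROTATION

# Size of the empirical correction relative to that nominal step.
drift_fraction = abs(PER_EXPOSURE_OFFSET_DEG) / nominal_step_deg

if __name__ == "__main__":
    for n in (1, 2, 3, 8):
        print(f"exposure {n}: extra X rotation {extra_x_rotation_deg(n):.3f} deg")
    print(f"nominal step: {nominal_step_deg:.3f} deg per exposure")
    print(f"correction / nominal step: {drift_fraction:.1%}")
```

A correction that grows linearly with the exposure number is what a small constant error in the assumed time or angle between exposures would produce, so the inter-exposure timing may be worth checking before hand-tuning the angle.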


Dec 18 2017, 04:09 PM

Post #2

Member Group: Members Posts: 146 Joined: 22-July 14 Member No.: 7220

I'm glad you figured out a solution! The stuff I've been working on has been producing map-projected images, but I think it might be a dead end for producing perspective output (which is my primary goal) unless I bring Maya or Blender into the mix. The boresight calculations are pretty good for the individual color channels, but like mcaplinger said, I've seen distortion around the extremes of the field of view.
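For readers comparing the two approaches, both map projection and projecting onto a 3D model rest on the same geometric step: intersecting each pixel's view ray with the planet and, for a map, converting the hit point to longitude and latitude. Below is a minimal sketch of that step, assuming a perfect sphere centred at the origin (like the stand-in sphere in the first post) rather than Jupiter's oblate shape. The function names are made up for illustration. In a real SPICE pipeline a routine such as `sincpt` would normally do this against the body's ellipsoid.

```python
import numpy as np


def ray_sphere_intersection(origin, direction, radius):
    """Nearest point where a ray hits a sphere centred at the origin, or None.

    origin:    ray start in the body-fixed frame
    direction: ray direction (need not be normalised)
    radius:    sphere radius, same units as origin
    """
    o = np.asarray(origin, dtype=float)
    d = np.asarray(direction, dtype=float)
    d = d / np.linalg.norm(d)
    b = np.dot(o, d)
    c = np.dot(o, o) - radius ** 2
    disc = b * b - c
    if disc < 0.0:
        return None          # ray misses the sphere
    t = -b - np.sqrt(disc)   # nearer of the two intersections
    if t < 0.0:
        return None          # sphere is behind the ray start (or start is inside it)
    return o + t * d


def lon_lat_deg(point):
    """Planetocentric longitude and latitude (degrees) of a point on the sphere."""
    x, y, z = point
    r = np.linalg.norm(point)
    lon = np.degrees(np.arctan2(y, x))
    lat = np.degrees(np.arcsin(np.clip(z / r, -1.0, 1.0)))
    return lon, lat


if __name__ == "__main__":
    hit = ray_sphere_intersection([3.0, 0.0, 0.0], [-1.0, 0.0, 0.0], 1.0)
    print("hit point:", hit, "lon/lat:", lon_lat_deg(hit))
```

Because Jupiter is noticeably flattened, a sphere is only a rough stand-in; the hit points near the limb and at high latitudes will differ from those on an ellipsoid.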


Matt Brealey 3D Projecting JunoCam images back onto Jupiter using SPICE Dec 12 2017, 02:50 PM

Gerald With 80.84 exposures per Juno rotation you should ... Dec 12 2017, 06:24 PM

mcaplinger QUOTE (Matt Brealey @ Dec 12 2017, 06:50 ... Dec 12 2017, 11:31 PM

Bjorn Jonsson Another possible issue is that at least in my case... Dec 13 2017, 01:03 AM

Kevin Gill QUOTE (Bjorn Jonsson @ Dec 12 2017, 09:03... Dec 18 2017, 12:00 AM

mcaplinger QUOTE (Kevin Gill @ Dec 17 2017, 04:00 PM... Dec 18 2017, 01:45 AM

Matt Brealey Hi Everyone, Thanks so much for the responses... Dec 18 2017, 03:54 PM

Brian Swift Question for everyone with a raw pipeline using th... Dec 18 2017, 06:50 PM

mcaplinger QUOTE (Brian Swift @ Dec 18 2017, 10:50 A... Dec 19 2017, 05:31 PM

Brian Swift QUOTE (mcaplinger @ Dec 19 2017, 09:31 AM... Dec 21 2017, 06:16 PM
